Reach-to-Grasp

Imagine that you are reaching into the fridge to grasp an object you can only partially see. Rather than relying solely on vision, you must use touch in order to find out where the object is and grasp it.

However, humans would not poke the object to localise it, as most approaches proposed for robots do. We compensate for the uncertainty by approaching the object in such a way that, if a contact occurs, it yields a good deal of information about where the object is and the object can be grasped with minimal adaptation of the initial trajectory.

Prediction of Motion

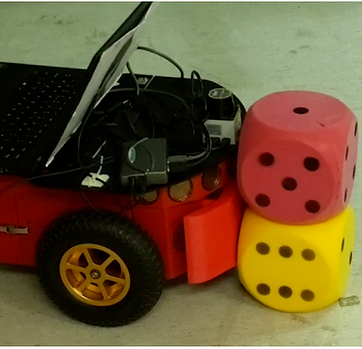

Being able to predict how an object will behave during a manipulation task is a vital ability for a robot. We present a novel approach to making robust predictions for previously unseen objects.

We propose that, by conditioning predictions on local surface features, we can generalise across objects of different shapes.

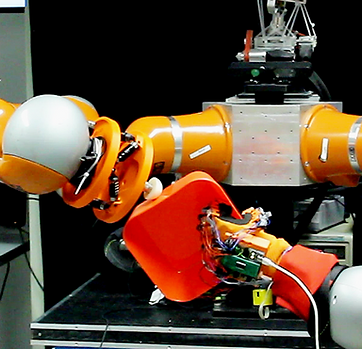

Intelligent Semi-Autonomous Robots for Hazardous Environments

Designing robotic assistance devices for manipulation tasks is challenging. This work is concerned with improving the accuracy and usability of semi-autonomous robots, such as human-operated manipulators or exoskeletons. The key insight is that a context- and user-aware system can make better decisions about how to assist the user.

MultiSensory Integration for Sensory Prediction

Our sensorimotor system maps sensory inputs into motor commands. It allows us to learn how to interact with the environment by learning features that can be perceived through multiple senses (e.g. knowing that there is a car because you see the car or you hear the engine). This makes us robust to missing or corrupted data (e.g. realising that there is a car behind us from its sound alone). Additionally, our internal model allows us to predict the next sensory state, which tells us when we need to adapt to unexpected situations.
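As a rough illustration of these two ideas, the sketch below fuses estimates from whichever senses are available and flags an observation that departs from the predicted one. It assumes, purely for illustration, that each sense reports an independent Gaussian estimate as a (mean, variance) pair and that fusion is inverse-variance weighting; the names `fuse` and `is_surprising` and the threshold of three standard deviations are hypothetical choices, not part of the project.

```python
from typing import Optional, Sequence, Tuple

Estimate = Tuple[float, float]  # (mean, variance), an assumed representation


def fuse(estimates: Sequence[Optional[Estimate]]) -> Estimate:
    """Inverse-variance fusion of the estimates that are present.

    A missing sense is passed as None and simply skipped, so the result
    degrades gracefully instead of failing.
    """
    available = [e for e in estimates if e is not None]
    if not available:
        raise ValueError("no sensory estimate available")
    precision = sum(1.0 / var for _, var in available)
    mean = sum(m / var for m, var in available) / precision
    return mean, 1.0 / precision


def is_surprising(predicted: float, observed: float,
                  variance: float, threshold: float = 3.0) -> bool:
    """True when the prediction error exceeds `threshold` standard deviations."""
    return abs(observed - predicted) > threshold * variance ** 0.5


# Distance to a car (metres) estimated by vision and by hearing.
vision, hearing = (10.0, 4.0), (12.0, 4.0)
both = fuse([vision, hearing])      # both senses available
blind = fuse([None, hearing])       # vision missing, hearing still usable
```

With both senses the fused variance is lower than that of either sense alone; with vision missing the estimate falls back to hearing.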

A robot needs a sensorimotor system to play the drum!

Hypothesis-based Belief Planning

Belief space planning is a viable way to formalise partially observable control problems and, in recent years, its application to robot manipulation has grown. However, this family of approaches has only been demonstrated on simplified control problems.

We apply belief space planning to the full problem of planning dexterous reach-to-grasp trajectories under object pose uncertainty.
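To make the notion of a belief over object pose concrete, here is a minimal sketch of a hypothesis-based belief update. It is a toy, one-dimensional illustration under stated assumptions and not the planner used in this work: the pose is a single coordinate along the approach axis, the belief is a list of weighted pose hypotheses, and the contact sensor is binary with a small error rate. The function name `update_belief` and the parameters `radius` and `p_error` are hypothetical.

```python
from typing import List, Sequence


def update_belief(hypotheses: Sequence[float], weights: Sequence[float],
                  finger_pos: float, contact: bool,
                  radius: float = 0.02, p_error: float = 0.05) -> List[float]:
    """Bayesian re-weighting of pose hypotheses after one tactile observation.

    Each hypothesis predicts contact if the finger lies within `radius` of
    the hypothesised object position. Hypotheses whose prediction matches
    the observation keep most of their weight; the others are down-weighted.
    """
    updated = []
    for pose, weight in zip(hypotheses, weights):
        predicted_contact = abs(finger_pos - pose) <= radius
        likelihood = 1.0 - p_error if predicted_contact == contact else p_error
        updated.append(weight * likelihood)
    total = sum(updated)
    return [w / total for w in updated]


# Three candidate object positions (metres), initially equally likely.
poses = [0.10, 0.15, 0.20]
prior = [1 / 3, 1 / 3, 1 / 3]
posterior = update_belief(poses, prior, finger_pos=0.15, contact=True)
```

A contact at 0.15 m concentrates the belief on the middle hypothesis. In this toy model, a planner could favour finger positions for which the hypotheses disagree about whether contact will occur.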

Active Exploration

Knowledge of object shape is fundamental to successful robot-object interaction. However, incomplete perception and noisy measurements make it hard to obtain accurate models of novel objects. Especially when vision and touch are used together to learn object models, a representation that can incorporate prior shape knowledge and heterogeneous, uncertain sensor feedback is paramount to fusing them coherently. Moreover, embedding a notion of uncertainty in the shape representation makes it possible to bias the active search for new tactile cues more effectively.
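One way to picture uncertainty-driven exploration is the sketch below, which picks the next touch location where the shape model is least certain. It assumes a Gaussian process with a squared-exponential kernel as the uncertain shape representation; this is an illustrative choice and the text above does not name a specific model. The function names, the length scale and the noise level are all hypothetical.

```python
import math
from typing import List, Sequence

Point = Sequence[float]


def rbf(a: Point, b: Point, length_scale: float = 0.05) -> float:
    """Squared-exponential kernel between two surface points."""
    d2 = sum((x - y) ** 2 for x, y in zip(a, b))
    return math.exp(-0.5 * d2 / length_scale ** 2)


def solve(A: List[List[float]], b: List[float]) -> List[float]:
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [rhs] for row, rhs in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(M[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (M[r][n] - tail) / M[r][r]
    return x


def posterior_variance(query: Point, observed: List[Point],
                       noise: float = 1e-4) -> float:
    """GP posterior variance at `query` given already-sensed points."""
    if not observed:
        return rbf(query, query)
    K = [[rbf(p, q) + (noise if i == j else 0.0)
          for j, q in enumerate(observed)] for i, p in enumerate(observed)]
    k = [rbf(query, p) for p in observed]
    alpha = solve(K, k)
    return rbf(query, query) - sum(ki * ai for ki, ai in zip(k, alpha))


def next_touch(candidates: List[Point], observed: List[Point]) -> Point:
    """Choose the candidate where the shape model is most uncertain."""
    return max(candidates, key=lambda c: posterior_variance(c, observed))


# Points already sensed (by vision or touch) and reachable touch candidates.
sensed = [(0.0, 0.0), (0.1, 0.0)]
candidates = [(0.01, 0.0), (0.05, 0.0), (0.3, 0.0)]
target = next_touch(candidates, sensed)
```

Here the candidate farthest from any sensed point is selected, because the model knows least about the surface there.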

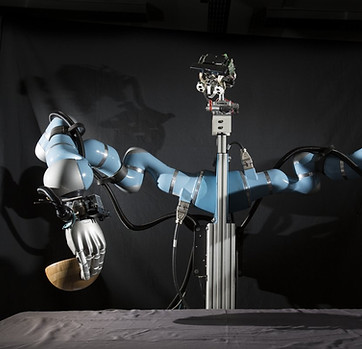

Intelligent Assistant for Wearable Robotics

Wearable robots (WRs) are mechanical devices worn by human subjects with the purpose of assisting, supporting or substituting motor functions (e.g. exoskeletons or prostheses).

We aim to investigate and develop an intelligent, semi-autonomous robotic system to support patients in using their upper-limb prosthetics.

Today, upper-limb amputees make up more than 1% of the UK population. However, more than 55% of them have abandoned their prosthesis, reporting that its functionality did not justify the psychological and physical burden of using it. As a result, a typical patient will refuse to use a dexterous prosthesis if the effort of learning to use it outweighs the benefits.

Our approach is to model the system as a two-agent collaborative system, in which the patient and the prosthesis achieve a symbiotic relationship. This will be achieved by bringing the autonomous intelligence typical of robots to prosthetics.